We are asking the wrong questions about AI and disruptive technologies

Why I think asking about the axiological weight of AI advancement is, practically speaking, almost irrelevant

Unfortunately for me, I'm sort of chronically online on X. I find myself enjoying the information [misinformation] overload I get from the app. Lately I've been seeing so many posts about AI, and most seem to border on mass hysteria about AI, while others fight back with radical optimism. I find the whole conversation polarising, as most conversations on X are, and I think we should all take a deep breath (easier preached than practised, I know).

I do not have the academic authority to make the claims I am about to make, but I'm ready to share them regardless. I believe we should restructure how we view AI and perhaps technological advancements at large. The few (bold?) claims I'm making are:

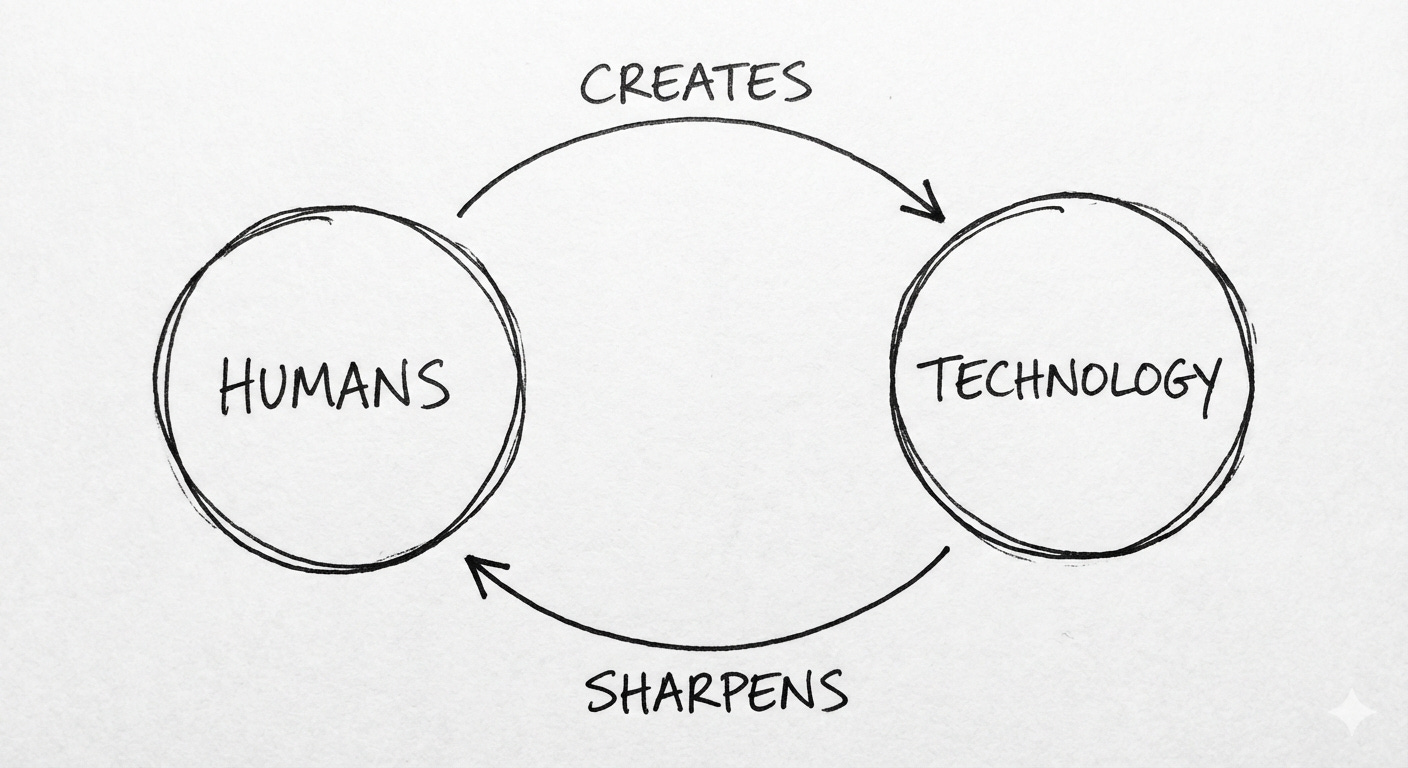

Technology is not an external concept that can be separated from human evolution; instead, it is a transformative extension of human beings and our collective will.

Technology, like the human hand, is axiologically amoral in itself. Even though it is functional, its moral value can only be outsourced.

Technological advancement isn’t good or bad, just transformative.

Everyone should be concerned with AI ethics, legislation and governance.

You've probably read these points and wondered if they are actually bold. Well, I don't know myself, but the last time I wrote something as mild as calling people to epistemic justice, I was called Hitlerian and an anti-intellectual. So truthfully, anything can be bold at this point :)

Now let me build on the claims I made earlier. Yes, I’ve always posited, albeit crudely, that technology is just a transformative extension of humans and our collective will. It’s an entity created by us, which in turn sharpens us as we create more technology: a necessary feedback loop that has been the driving force of human civilisation.

Now, if technology is an extension of us, can we then say technology has the intrinsic axiological value that humans, by virtue of our dignity, have?

Well, no, at least not yet. Right now, I’d ask: does the human hand have the capacity to be classified as good or bad intrinsically? I’d argue no, it doesn’t. What it does is fulfil the intentions of our minds. This means that when a person writes a good book or strangles another individual, it’s not the hands that bear the moral weight of the action, it’s the person. In this light, we can say technology acts the same way: as a tool and amplifier that is functional but amoral.

Of course, this position breaks down the moment technologies stop outsourcing decision-making to us and become independent and sentient. At that point they are no longer amoral; they become moral agents.

This is the AI question. If tools are as sentient as their users, are they still tools, or do they become co-agents? This is one of the many questions inspiring me to become a student of AI ethics and epistemology, as I cannot answer them yet, however curious I may be.

So at this point you’re probably wondering what message I’m trying to get across. After all, AGI breaks the amorality of technology, which in turn validates the hysteria surrounding AI development and adoption, so what truly is the point?

Well, this is where I put forward another point: disruptive technological advancements are not net-positive or net-negative by any large margin. They tend to be net-zero, or only slightly (negligibly) net-positive or net-negative. What they do is revolutionise and transform our lives and shift the zeitgeist across politics, culture, economics, and more.

In the same way that nuclear technology brought, and continues to bring, us ever closer to non-existence, it also created a system of deterrence that revolutionised warfare forever, while promising a cleaner, low-carbon source of energy.

In the same way the internet revolutionised the world with a level of connectivity and knowledge democracy never experienced before, it also gave us exposure to an unprecedented level of polarisation and mass misinformation. If we look back through all disruptive technologies, including the discovery of fire, we can see this pattern.

Can we then say AI and future AGI advancements will be transformative and radically change the world? Yes, absolutely. Will they be net-positive or net-negative? Even though we cannot truly tell, I'd argue they will be net-zero, as with other disruptors before them.

Now, if we cannot truly tell or predict the outcome, then why open Pandora's box in the first place? Well, because it's what humans do: we open Pandora's box all the time. Driven by curiosity? Lust for power? Hope? Whatever it is, we will build and continue to build, and that's a natural fact. It is this ingenuity that pushes the boundaries of what it means to exist as humans.

So what's the point of the entire rambling?

Well, the point is this: if human ingenuity cannot be stopped (even if suppressed by legislation), if technological advancements are hardly ever net-positive or net-negative, and if human beings are ever closer to creating intelligent systems that match and will exceed our intelligence and agency, then we should be prepared in a pragmatic way, neither naively optimistic nor irredeemably cynical, for the next phase of our collective civilisation.

We should be ready, equipping ourselves with the tools to navigate this emerging era: creating frameworks that hold bad AI usage and implementation accountable and celebrate the responsible kind; voting for governments that will adopt AI responsibly; and democratising AI ethics and legislation. This is what our preoccupation should be: how to form frameworks of human survival and continuity as intelligent systems emerge.

Good piece

I believe the first step is for us to collectively work towards AI being democratized, open-sourced and local, where everyone is able to access this technology without cloud inferencing and without paying rent to the technocrats.

I think that's a bit too complacent tbh, given that for the first time we are forced to confront a thinking technological creation, one that doesn't sit still waiting for human input to perform actions. Yes, we are currently still at a rudimentary level in AI's metamorphosis, with AGI still speculatively a pipe dream, but ASI is the actual goal, which you didn't reference. With ASI in view, it is actually valid to enter panic mode, as it's not a sci-fi adaptation: we truly have no clue what is possible if we develop autonomous beings. With true ASI, ethics and stuff are just boundaries it will evolve past, like we humans constantly evolve and change. It does beg the quite befuddling question of why we'd create, or aspire to develop, something that could spell our doom, not in a cynical or hyperbolic sense but in very rational, quite literal terms. But as you've concluded, it is indeed inevitable that we create, even if it's our own euthanasia pods.